Specta-Seppuku Hype, or, Why Sam Altman really called for urgent regulation of AI at the AI Impact Summit

The fourth annual AI Impact Summit took place in India from February 16-20 this year. It was a star-studded event hosting Big Tech heavyweights including OpenAI, Nvidia, Anthropic and Google, as well as various heads of state.

Deals were made and buzzwords were thrown around liberally (shout-out to The Maybe for their useful and fun buzzword bingo card), but one thing that stood out was OpenAI CEO Sam Altman’s call for “urgent” regulation of the AI industry.

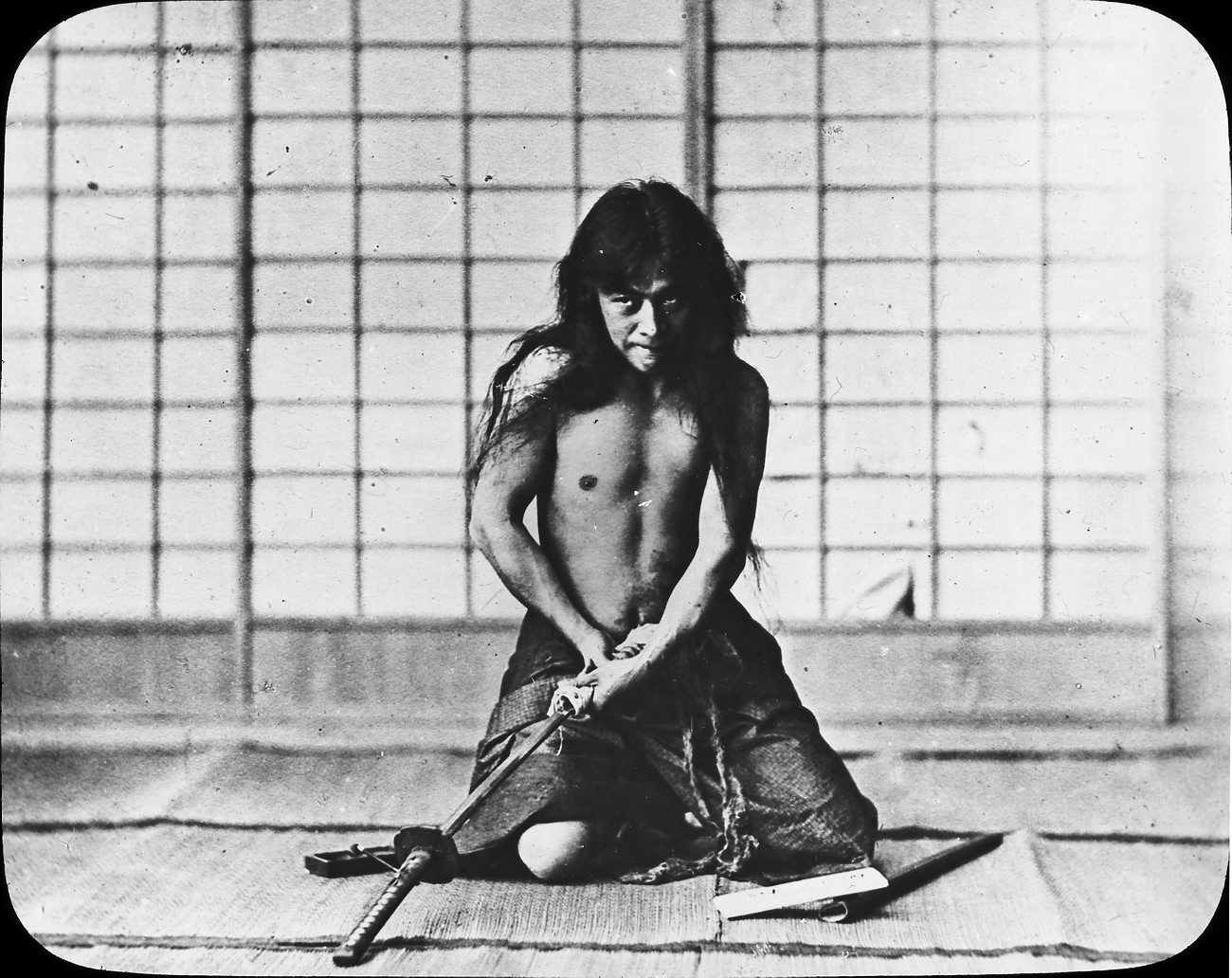

We hereby submit the term “specta-seppuku hype” to describe what is going on: a prestigious tech figure commits symbolic, ritual suicide (the Japanese concept of seppuku) by criticising their own inventions. Unlike in the Japanese tradition, the aim is not to protect one’s dignity but to create spectacular hype, drawing public attention through an appearance of humility, usually in order to maximise profit.

In this text, we analyse Altman’s case and historicise specta-seppuku as a phenomenon that has recurred since the resurgence of AI debates around 2010.

AFP reports that Altman said, “We expect the world may need something like the IAEA for international coordination of AI,” with the ability to “rapidly respond to changing circumstances.” (The IAEA is the International Atomic Energy Agency.)

These comments are difficult to reconcile with OpenAI’s conduct. Why? Because Altman is asking the world for urgent AI oversight while his company constantly saturates global markets with new products. The conversation about artificial intelligence (AI) never seems to settle. One reason is the recurring spectacle of its most prominent creators urging society to regulate, or even fear, the technology, often at the very moments they are driving it forward.

Looking back over the last decade or so, one can trace a throughline: from Elon Musk’s fervid calls for government action against AI dangers in the 2010s, to Geoffrey Hinton’s more recent, disarmingly dramatic public doubts about his own life’s work, and now to Sam Altman, who seems to be following the same playbook. Each phase in this sequence is another iteration of what has been termed “counter-hype” or, as this article proposes, “specta-seppuku” hype: the spectacle of a field’s most decorated insiders warning about their own creations, puzzling analysts with their surprising conscientiousness and winning public trust by displaying what appears to be humility. Such humility, of course, can be manufactured precisely in order to gain that trust.

The approach has become a defining feature of the current AI era: a complicated ritual in which warning and self-promotion go hand in hand.

From Musk to Hinton to Altman

Back in 2014, as AI started to surface in daily life and attract enormous investment, Elon Musk began issuing dire warnings about its dangers. He claimed AI could “spell the end of humanity” and was “potentially more dangerous than nukes.” Because Musk was already established as a tech visionary, these warnings were widely picked up by the media and taken seriously in public discussion.

His calls for early and urgent regulation, strikingly, were never particularly specific. When it came to what exactly should be regulated and how, his proposals were hazy, providing little direct guidance for policymakers. This ambiguity was not coincidental. It created a regulatory vacuum that favored those already at the center of the AI world.

While Musk spoke passionately about the need for safeguards, he was also helping to accelerate AI’s development. This dual role allowed him, and companies like OpenAI, to appear both as pioneers and as champions of responsible innovation. Musk thus managed to secure a place for himself and his allies at the negotiating table if and when real regulation came. Meanwhile, other AI experts (such as Rodney Brooks or Yann LeCun) and industry insiders pushed back.

In all of this, the call for regulation became a dramatic performance designed to generate public concern and nudge policymakers. At the same time, these sweeping and generally unspecific calls opened the door for entrenched players to maintain a privileged position in the market. While his doomsday language drew public and political attention, Musk continued to invest heavily in AI, co-founding OpenAI in 2015 with Sam Altman and others, with backing from investors including Peter Thiel.

On to Geoffrey Hinton. Hinton spent decades on neural networks and machine learning, advocating approaches that were commonly marginalised in AI circles early in his career. From his days as a “black sheep” under the sceptical gaze of his mentors and peers to the moment when deep learning and neural networks became the backbone of AI research, Hinton’s journey mirrors AI’s cycles of boom and winter. After his group’s success at the 2012 ImageNet competition and his eventual baptism by the media as a “godfather of AI” when he won the 2018 ACM Turing Award (ironically, after all proponents of the previous AI paradigm had died, signalling a complete Kuhnian shift), Hinton began warning the public in 2023 that the very inventions he had championed might bring risk or disaster.

Unlike Musk’s, Hinton’s public worries about AI carry the weight of deep technical authority. When he expresses concern about uncontrolled systems, it resonates as the voice of direct experience: the field’s own canary warning of danger. Hinton’s willingness to dramatise his fears can be understood as “specta-seppuku”, a public, almost ritual display in which the expert both confesses and repents, amplifying worry while maintaining an aura of leadership. This move reframes his status: he has both “the poison” and “the remedy,” to quote the Prodigy, positioning himself as the one who knows best how to manage what he helped unleash.

He may also have wished to follow a trajectory similar to that of cyberneticist Norbert Wiener, who in 1950 published 'The Human Use of Human Beings,' both advocating automation and warning about its dangers; or that of Joseph Weizenbaum, whose 1976 'Computer Power and Human Reason: From Judgment to Calculation' was written in the aftermath of public attention to his own early conversational chatbot, ELIZA. Prominent AI researcher Stuart Russell was a mid-2010s example: he co-authored, with Stephen Hawking, an Independent article heralding the end of humanity at the hands of AI, and eventually published his 2019 book 'Human Compatible: Artificial Intelligence and the Problem of Control.' More recently, a number of well-known natural language processing (NLP) experts who initially helped build mainstream AI systems are now capitalising on criticising them. One can clearly observe a criti-hype pattern here, one that scales up to the level of a ritualistic public stunt. Hinton is the latest to perform this routine, fulfilling the archetype of the responsible inventor and thus shielding himself from the other AI archetype: that of "the mad scholar who seeks dangerous knowledge and who desires to supersede God."

This routine set the stage for the next act. Enter Sam Altman, the current CEO of OpenAI, who in 2026 steps to the center as the most recent - and perhaps most influential - voice in this pattern. Altman’s warnings about AI’s dangers and the need for urgent, globally coordinated regulation come just as OpenAI’s products reach hundreds of millions of users and its partnerships sweep through entire continents. His call for an “IAEA for AI” sounds high-minded, but the effect is to lock in the advantages of established players - those with the scale, resources, and political access to shape whatever oversight emerges.

The Culture of Forgetting

Cycles of hype around AI are not random. They function as a dialectic, a push and pull between expectation and expertise. When outsiders, such as the cosmologist Stephen Hawking or the entrepreneur Elon Musk, once set the narrative, AI researchers would contest their legitimacy, arguing that they did not understand the field’s technical realities. This process relied on the drama of outsiders sounding alarm bells.

Recent years have produced a novel twist. Now, it is core researchers themselves - those with the most “inside” view - who take the stage to warn of doom. Hinton, for example, borrows some of the language of the critique that once frustrated his circles when non-experts dominated the debate - except now he can speak as a genuine inventor, not just a commentator. Altman similarly issues public cautions even as he steers one of the most powerful and fastest-growing AI enterprises.

This shift creates a spectacle with enormous influence. When the creators of AI systems themselves voice their anxieties, media coverage intensifies, politicians feel compelled to act, and funding priorities shift. Everyone wants to hear from the person who sings the Prodigy line: “I got the poison, I got the remedy.” The result is that major players reinforce their authority in both directions - warning about dangers and presenting themselves as best placed to resolve them.

Such cycles are further entrenched by a kind of cultural forgetfulness. Recall, for example, how Bill Gates warned on January 28, 2015 that AI could spell humanity’s doom, only to declare almost exactly three years later (January 29, 2018) that AI’s biggest impact might just be that everyone takes longer vacations. Media coverage rarely reconciles these wild swings. Instead, the conversation is reset, ready for the next existential or utopian pronouncement.

This collective forgetfulness keeps the regulation/deregulation performance alive. Each wave of AI hype is seeded by doomsaying or utopian claims from respected public figures, who call for regulation that seldom threatens their own projects but makes it harder for rivals and newcomers to compete.

Who Holds the Power to Define AI’s Future?

During key moments of AI hype, statements by such public commentators are quoted, repeated and, crucially, rarely challenged for technical depth. Headline after headline presents dire warnings from celebrated names, generating drama and urgency. Journalists, who need quotable authorities that the public recognises, often gravitate toward these big names, sidelining less charismatic but more technically knowledgeable AI insiders.

If history is any guide, the rituals of hype and warning will shape which voices governments trust, and which institutions collect new resources. Altman’s recent calls for regulation demonstrate perfectly how public urgency can morph into structures that ensure his own organisation has a seat at the top. Hinton’s transformation into the prophet who warns of his own work brings credibility and new audiences - ensuring that those who give the warning are also those best placed to manage the outcomes.

For those watching this spiral unfold, the question should always be: who benefits? And who is really being protected by these warnings: society, or those who want to guard their lead in a rapidly changing field? As long as the spectacle remains, so does the temptation for those at the pinnacle of expertise to present themselves as both saviour and potential culprit, manoeuvring endlessly between alarm and assurance. Such specta-seppuku manoeuvres are a common tactic of strategic lying, so Hype Studies could not think of a better post for April Fools' Day.

| Dr Vassilis Galanos SFHEA is Lecturer in Digital Work at the University of Stirling’s Business School. Vassilis investigates the history and sociology of artificial intelligence and the internet, is the co-author of the forthcoming book Internet, AI & Society: A Textbook for the Perplexed, and co-founder of AI Ethics & Society and the Hype Studies group. |

| Marché Arends is a South African investigative journalist committed to holding power to account. Her work has appeared in Africa Uncensored, The Continent, The Africa Report, Semafor Africa, The Daily Maverick and elsewhere. She was part of the 2025 cohort of Pulitzer Center AI Accountability Fellows and produced the investigation, "Fuelling the AGI Hype: The recruitment playbook to land Big Tech contracts" (published in Africa Uncensored). She is interested in human-centred stories that live at the intersection of tech, labour, and social justice. |